Role

Everything — product design, ML integration, backend architecture, frontend, auth system, achievements engine, deployment, and the submission video.

Constraints

One-month hackathon window, solo build, no budget for paid ML APIs, and a requirement to ship a judge-ready product — not a demo. Weight estimation from a 2D image, label ambiguity from the model, and auth reliability under edge cases were the hardest technical constraints.

Problem

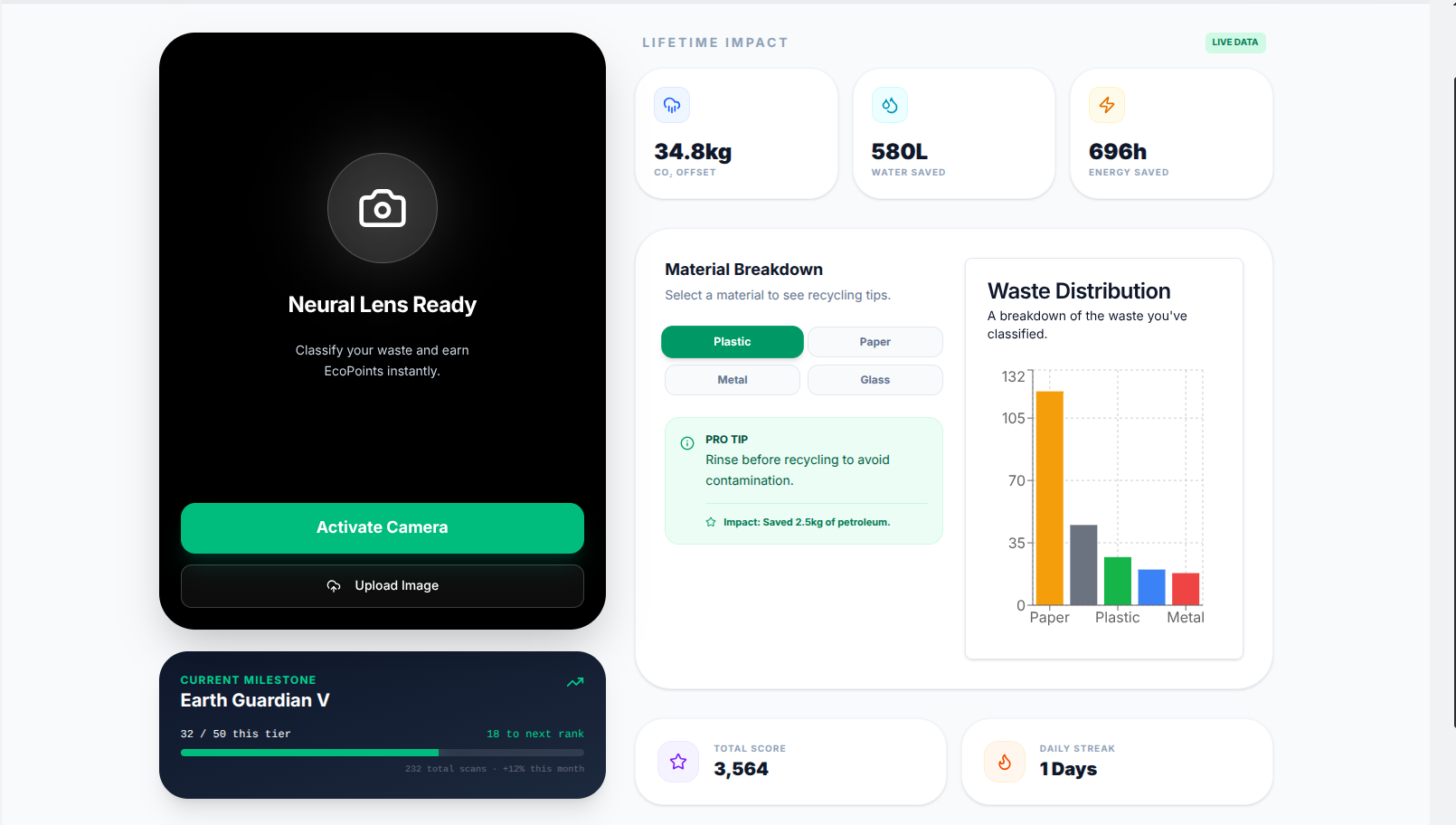

Most people want to recycle responsibly but face two blockers: they don't know what material something is, and they have no sense of whether their effort matters at scale. Existing recycling apps either give dry information with no feedback loop, or require manual input. EcoLens was built to solve both: real-time AI classification identifies the material from a photo, and a cumulative impact total shows how individual scans add up over time.

Approach

- Started with the core loop first — get one scan working end-to-end before building any gamification

- Chose HuggingFace Spaces for the ML endpoint to stay within zero budget with no cold-start penalty

- Kept impact math conservative and transparent — approximate weight ranges per category, documented multipliers, no inflated numbers

- Built auth last but made it robust: OTP + JWT + token rotation + transactional rollback — the kind of detail that breaks apps at demo time

- Submitted a structured video covering the problem, live demo, and architecture overview — knowing presentation is scored equally alongside code

My responsibilities

- Designed and built the full prediction pipeline: client image → POST /api/predict → HuggingFace proxy → label normalization → point & impact calculation → MongoDB write → frontend update

- Built a custom achievements engine with material-specific badges (plastic, paper, glass, metal, organic) and a global leaderboard with real-time rank updates

- Engineered OTP-based email verification with SMTP, JWT auth in HTTP-only cookies, and token rotation in /api/user — including transactional user creation with rollback on email failure

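- Implemented conservative environmental impact formulas: CO₂ saved (kg) = (estimated_weight_g / 1000) × (EF_kg_per_tonne / 1000), where the first division converts grams to kilograms and the second converts the per-tonne emission factor to per-kilogram, with parallel calculations for water saved and energy saved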

- Handled low-confidence scan suppression — scans returning 'unknown' or below confidence threshold are not logged, keeping impact data honest

- Designed a responsive UI with camera and upload flows, a lifetime impact dashboard, and Framer Motion animations — then iterated mobile UX through multiple rounds to reduce permission and cropping friction
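The impact formula and the low-confidence gate from the list above can be sketched as two small pure functions. This is a minimal illustration, not the EcoLens implementation: the weight ranges, per-tonne factors, the 0.6 threshold, the choice of the lower weight bound as the conservative estimate, and all names (`FACTORS`, `estimateImpact`, `shouldLogScan`) are assumptions made for the example.

```typescript
// Illustrative sketch only: every name and number here is a placeholder
// assumption, not a value taken from the EcoLens codebase.
type Material = "plastic" | "paper" | "glass" | "metal" | "organic";

interface MaterialFactors {
  weightRangeG: [number, number]; // assumed min/max weight of one item, in grams
  co2KgPerTonne: number;          // assumed kg CO2 avoided per tonne recycled
  waterLPerTonne: number;         // assumed litres of water saved per tonne
  energyKwhPerTonne: number;      // assumed kWh of energy saved per tonne
}

interface Impact {
  co2Kg: number;
  waterL: number;
  energyKwh: number;
}

const FACTORS: Record<Material, MaterialFactors> = {
  plastic: { weightRangeG: [10, 50], co2KgPerTonne: 1500, waterLPerTonne: 2000, energyKwhPerTonne: 5000 },
  paper:   { weightRangeG: [5, 100], co2KgPerTonne: 900, waterLPerTonne: 26000, energyKwhPerTonne: 4000 },
  glass:   { weightRangeG: [150, 500], co2KgPerTonne: 300, waterLPerTonne: 500, energyKwhPerTonne: 40 },
  metal:   { weightRangeG: [12, 60], co2KgPerTonne: 9000, waterLPerTonne: 1000, energyKwhPerTonne: 14000 },
  organic: { weightRangeG: [50, 300], co2KgPerTonne: 500, waterLPerTonne: 0, energyKwhPerTonne: 0 },
};

const MIN_CONFIDENCE = 0.6;  // assumed cut-off for logging a scan
const GRAMS_PER_TONNE = 1e6;

// Converts the estimated item weight to tonnes so the units match the
// per-tonne factors, then applies the same multiplication to each metric.
function estimateImpact(material: Material): Impact {
  const f = FACTORS[material];
  const tonnes = f.weightRangeG[0] / GRAMS_PER_TONNE; // lower bound = conservative
  return {
    co2Kg: tonnes * f.co2KgPerTonne,
    waterL: tonnes * f.waterLPerTonne,
    energyKwh: tonnes * f.energyKwhPerTonne,
  };
}

// A scan is only logged when the label is known and the confidence clears
// the threshold; everything else is dropped before it can affect the totals.
function shouldLogScan(label: string, confidence: number): boolean {
  return label !== "unknown" && confidence >= MIN_CONFIDENCE;
}
```

With these placeholder factors, a plastic scan is credited at the 10 g lower bound, so `estimateImpact("plastic").co2Kg` comes to roughly 0.015 kg, while `shouldLogScan("unknown", 0.99)` is rejected regardless of confidence.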

Technical solution

- Next.js (App Router) for the full stack — serverless API routes handle prediction proxy, auth, achievements, and leaderboard

- HuggingFace Space hosts the image classification model; results are proxied server-side so labels are normalized and raw confidence scores are stripped before the response reaches the client

- MongoDB stores users, scan history, achievement state, and leaderboard rankings — Mongoose handles schema validation and transactional user creation

- JWT in HTTP-only cookies with token rotation on every /api/user call — SMTP OTP for email verification via Nodemailer

- SWR for client-side data fetching — dashboard and leaderboard update reactively without full page reloads

- Framer Motion for scan result animations, badge unlocks, and dashboard entrance sequences

- Tailwind for consistent, responsive UI across camera, upload, dashboard, and leaderboard views
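To make the achievements and leaderboard routes from the stack above more concrete, here is a minimal sketch of the kind of logic they might run. The badge tiers, thresholds, badge identifiers, and data shapes are hypothetical; the original engine's rules are not documented here.

```typescript
// Illustrative sketch: tiers, thresholds and names are assumptions.
type Material = "plastic" | "paper" | "glass" | "metal" | "organic";
type ScanCounts = Record<Material, number>;

interface BadgeTier {
  name: string;
  minScans: number;
}

const BADGE_TIERS: BadgeTier[] = [
  { name: "bronze", minScans: 5 },
  { name: "silver", minScans: 25 },
  { name: "gold", minScans: 100 },
];

// Returns every badge id (e.g. "plastic-bronze") the counts qualify for.
function earnedBadges(counts: ScanCounts): string[] {
  const badges: string[] = [];
  for (const material of Object.keys(counts) as Material[]) {
    for (const tier of BADGE_TIERS) {
      if (counts[material] >= tier.minScans) {
        badges.push(`${material}-${tier.name}`);
      }
    }
  }
  return badges;
}

// Compares counts before and after a scan so the UI can animate only
// the badges that were unlocked by that scan.
function newlyUnlocked(before: ScanCounts, after: ScanCounts): string[] {
  const alreadyHeld = new Set(earnedBadges(before));
  return earnedBadges(after).filter((badge) => !alreadyHeld.has(badge));
}

interface LeaderboardEntry {
  userId: string;
  points: number;
}

// 1-based rank by descending points, or null if the user is not listed.
function rankOf(userId: string, entries: LeaderboardEntry[]): number | null {
  const sorted = [...entries].sort((a, b) => b.points - a.points);
  const index = sorted.findIndex((entry) => entry.userId === userId);
  return index === -1 ? null : index + 1;
}
```

Keeping these as pure functions over stored counts and points means the same check can run inside the scan request and again when the dashboard refetches, without the two drifting apart.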

Architecture notes

Fully serverless within Next.js, with no separate backend process. Client sends a base64 image to POST /api/predict. The route proxies to the HuggingFace endpoint, receives labels + confidence scores, maps the top label to one of six categories (plastic, paper, glass, metal, organic, other), computes points and impact using estimated weight ranges per category, writes the scan to MongoDB, updates the user's cumulative stats and achievement state, then returns the enriched result to the client in a single response.

Low-confidence results and 'unknown' labels are rejected before the write step, which keeps impact data honest.

Auth is handled via /api/user: OTP is generated and emailed on registration, verified on the next call, and a JWT is issued into an HTTP-only cookie with rotation on every subsequent authenticated request.
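The decision step in the middle of the /api/predict flow (pick the top prediction, reject it or normalize it, assign points) can be sketched as a pure function that sits between the HuggingFace call and the MongoDB write. The raw label strings, alias table, 0.6 threshold, point values, and names such as `decideScan` are assumptions for illustration only.

```typescript
// Illustrative sketch: labels, threshold and point values are assumptions.
type Category = "plastic" | "paper" | "glass" | "metal" | "organic" | "other";

interface Prediction {
  label: string; // raw label as returned by the model
  score: number; // confidence in the range 0..1
}

type ScanDecision =
  | { accepted: true; category: Category; points: number }
  | { accepted: false; reason: "no_predictions" | "unknown_label" | "low_confidence" };

// Hypothetical mapping from raw model labels to the six app categories.
const LABEL_ALIASES = new Map<string, Category>([
  ["plastic", "plastic"],
  ["plastic bottle", "plastic"],
  ["paper", "paper"],
  ["cardboard", "paper"],
  ["glass", "glass"],
  ["metal", "metal"],
  ["aluminium can", "metal"],
  ["organic", "organic"],
  ["food waste", "organic"],
]);

const POINTS: Record<Category, number> = {
  plastic: 10, paper: 5, glass: 8, metal: 12, organic: 4, other: 1,
};

const MIN_CONFIDENCE = 0.6;

function normalizeLabel(raw: string): Category {
  // Anything the alias table does not recognise falls into "other".
  return LABEL_ALIASES.get(raw.trim().toLowerCase()) ?? "other";
}

function decideScan(predictions: Prediction[]): ScanDecision {
  if (predictions.length === 0) {
    return { accepted: false, reason: "no_predictions" };
  }
  // Take the highest-scoring prediction rather than trusting input order.
  const top = predictions.reduce((best, p) => (p.score > best.score ? p : best));
  if (top.label.trim().toLowerCase() === "unknown") {
    return { accepted: false, reason: "unknown_label" };
  }
  if (top.score < MIN_CONFIDENCE) {
    return { accepted: false, reason: "low_confidence" };
  }
  const category = normalizeLabel(top.label);
  // The raw score is deliberately left out of the result sent to the client.
  return { accepted: true, category, points: POINTS[category] };
}
```

In a route handler, only an `accepted: true` decision would proceed to the database write and stats update; a rejected decision would be returned to the client as-is so the UI can prompt for a rescan.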

Outcome / Results

Placed 3rd internationally at Hack for Humanity 2026 — solo build, 775 participants. Judges scored the project on impact, innovation, design, and presentation. The predict → persist → notify pipeline held up under demo conditions, auth edge cases were handled, and the leaderboard and achievements gave the project a live, working feel rather than a static prototype.

Proof points

- 3rd place finish at Hack for Humanity 2026 — 775 international participants, solo build.

- Full ML pipeline shipped within a 1-month hackathon window with zero paid API budget.

- Auth system handles OTP verification, JWT rotation, and transactional rollback — no auth failures during judging.

- Conservative impact formulas with confidence-gated scan logging — data integrity over inflated numbers.

Lessons

- Integrating ML is more than wiring up a model — UX around low confidence, error states, and mobile camera flows determines whether the product feels real or broken.

- Small auth details (cookie naming consistency, token rotation, OTP edge cases) break apps more reliably than mis-tuned models. Get auth right before building features on top of it.

- Conservative math builds more trust than impressive-looking numbers. Judges and users both notice when impact estimates feel inflated.

- Gamification works — the leaderboard and badges gave the project energy during the demo that a plain scan tool would not have had.

- Presentation is half the score. A clear problem statement, live demo, and architecture walkthrough in the video matter as much as the code.

Placement: 🏆 3rd Place, Hack for Humanity 2026

Participants: 775 international participants

Build: Solo (design, ML integration, backend, frontend, deployment)

Want to see the full pipeline in action or discuss how to integrate ML into a production web app? Check the live demo or reach out directly.